The Introduction

Picture an infrastructure of loosely coupled microservices in a Docker Swarm environment. The services expose REST endpoints, and we use Spring Boot to implement them.

However, at around 400MB of memory each, these services hardly qualify as ‘micro’ services.

This led to an investigation into the memory usage of Spring Boot, and to a search for possible alternatives.

The memory usage of spring is described by Dave Syer here, and here for Spring Boot in docker. A comparison of the various REST frameworks can be found here, but most comparisons don’t specifically look at memory usage.

So I decided to take six popular Java frameworks with REST support, set up a simple “Hello World” endpoint on each of them, and measure the memory usage under a small load on the endpoint.

That way the memory usage of the framework itself can easily be measured.

I picked Spring Boot (obviously), DropWizard, Play, Vertx, Spark (Java) and Jersey (JAX-RS reference implementation) running on Tomcat 8.

The Setup

All the apps have a minimal setup, following the guidelines of the framework. They all have a simple GET endpoint returning a string. For example, for Spring Boot:

    @RestController
    public class HelloController {

        @GetMapping(value = "hello")
        public String hello() {
            return "hello-world";
        }
    }

and for Spark:

    public static void main(String[] args) throws UnknownHostException {
        get("/hello", (req, res) -> "Hello World");
    }

which is probably the shortest Java definition of the service among all the frameworks.

Then a runnable jar is built: with the maven-shade plugin for Spark, Vertx and Dropwizard, and with the spring-boot Maven plugin for Spring Boot.

Then the docker file to make the docker image:

    FROM openjdk:8-jdk
    COPY ./target/minimal-boot-1.jar /usr/app/boot.jar
    WORKDIR /usr/app
    HEALTHCHECK --interval=5s CMD curl -f http://localhost:8080/hello || exit 1
    CMD java -XX:NativeMemoryTracking=summary -jar boot.jar

Here we use the Docker health check with curl both to check that the service is running correctly and to give a steady load, calling the service every 5 seconds.

The NativeMemoryTracking option is added to inspect the memory usage of this container in more detail (see results).

For Play, an existing Docker image on Docker Hub is used and extended, because of the hassle of making a runnable jar with sbt.

For Tomcat an existing docker image is used and extended adding curl for the health check and the generated war of the application:

    FROM tomcat:9.0-jre8-alpine
    RUN apk add --update curl && rm -rf /var/cache/apk/*
    COPY target/minimal-jersey-1.war /usr/local/tomcat/webapps/jersey.war
    HEALTHCHECK --interval=5s CMD curl -f http://localhost:8080/jersey/hello/ || exit 1
    CMD ["catalina.sh", "run"]

Then a compose file is used to start it all up :

    version: '3.1'
    services:
      springboot:
        image: boot
        environment:
          - "_JAVA_OPTIONS=${JAVA_OPTIONS}"
      play:
        image: play
        environment:
          - "_JAVA_OPTIONS=${JAVA_OPTIONS}"
      dropwizard:
        image: drop
        environment:
          - "_JAVA_OPTIONS=${JAVA_OPTIONS}"
      vertx:
        image: vertx
        environment:
          - "_JAVA_OPTIONS=${JAVA_OPTIONS}"
      spark:
        image: spark
        environment:
          - "_JAVA_OPTIONS=${JAVA_OPTIONS}"
      jersey:
        image: jersey
        environment:
          - "_JAVA_OPTIONS=${JAVA_OPTIONS}"

Here the JAVA_OPTIONS parameter is used to give all apps the same heap space properties, e.g.:

    export JAVA_OPTIONS="-Xmx500m -Xms50m"
    docker-compose up -d
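To double-check that the heap options really reached a JVM, a tiny class like the one below can be run inside one of the containers. This is only a minimal sketch and not part of the original setup; the class name `HeapCheck` and its helper are made up for illustration. It relies on nothing but the standard `Runtime` API.

```java
class HeapCheck {
    // Convert a byte count to whole mebibytes (rounded down).
    static long toMiB(long bytes) {
        return bytes / (1024L * 1024L);
    }

    public static void main(String[] args) {
        Runtime rt = Runtime.getRuntime();
        // maxMemory() reflects -Xmx; the JVM may report slightly less than
        // the configured value, depending on the garbage collector in use.
        System.out.println("max heap:       " + toMiB(rt.maxMemory()) + " MiB");
        System.out.println("committed heap: " + toMiB(rt.totalMemory()) + " MiB");
        System.out.println("used heap:      " + toMiB(rt.totalMemory() - rt.freeMemory()) + " MiB");
    }
}
```

HotSpot normally also prints a `Picked up _JAVA_OPTIONS: ...` line at startup, which is a second way to confirm the setting in the container logs.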

The Results

For memory usage insights look at docker stats. When running the applications without heap constraints on a 2GB docker environment you’ll find something like this after a few minutes:

    NAME                CPU %   MEM USAGE / LIMIT     MEM %    NET I/O       BLOCK I/O         PIDS
    rest_springboot_1   2.83%   248.3MiB / 1.952GiB   12.42%   1.53kB / 0B   21.3MB / 0B       33
    rest_spark_1        2.67%   31.96MiB / 1.952GiB   1.60%    1.71kB / 0B   12.5MB / 0B       27
    rest_vertx_1        2.70%   47.8MiB / 1.952GiB    2.39%    1.78kB / 0B   22.4MB / 0B       21
    rest_jersey_1       0.14%   129.7MiB / 1.952GiB   6.49%    1.71kB / 0B   25.3MB / 0B       49
    rest_play_1         4.16%   176.5MiB / 1.952GiB   8.83%    1.46kB / 0B   29.6MB / 32.8kB   33
    rest_dropwizard_1   3.19%   139.2MiB / 1.952GiB   6.97%    1.78kB / 0B   16.7MB / 0B       35

You discover immediately that Spring Boot has the highest memory usage, while Spark uses the least.

To analyse the effect of the heap size we do the same measurement with a fixed heap size (-Xms and -Xmx both set to 50M), and get the following results:

    NAME                CPU %   MEM USAGE / LIMIT     MEM %   NET I/O       BLOCK I/O     PIDS
    rest_springboot_1   0.16%   151MiB / 1.952GiB     7.56%   1.65kB / 0B   0B / 0B       33
    rest_spark_1        0.15%   35.68MiB / 1.952GiB   1.78%   1.65kB / 0B   0B / 0B       27
    rest_vertx_1        0.10%   50.94MiB / 1.952GiB   2.55%   1.73kB / 0B   0B / 0B       20
    rest_jersey_1       0.17%   110.8MiB / 1.952GiB   5.55%   1.73kB / 0B   0B / 0B       49
    rest_play_1         1.10%   88.76MiB / 1.952GiB   4.44%   1.65kB / 0B   0B / 32.8kB   33
    rest_dropwizard_1   0.17%   127.3MiB / 1.952GiB   6.37%   1.82kB / 0B   12.3kB / 0B   34

Now we see Spring Boot still using a large amount of memory, far more than its heap space, while Spark and Vertx use very little of the heap and have a very small memory footprint.
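The gap between container memory and heap size can be made explicit with a little arithmetic. The sketch below takes the MEM USAGE figures from the fixed-heap run above and subtracts the heap size. The class and method names are illustrative, and treating the 50M heap as exactly 50 MiB is a simplifying assumption.

```java
import java.util.LinkedHashMap;
import java.util.Map;

class NonHeapOverhead {
    // Heap size of the fixed-heap run (-Xms and -Xmx at 50M), treated as 50 MiB.
    static final double HEAP_MIB = 50.0;

    // Container memory relative to the heap size. A positive value is memory
    // used on top of the heap size; a negative value means the container
    // uses less memory than the configured heap size.
    static double relativeToHeapMiB(double containerMiB) {
        return containerMiB - HEAP_MIB;
    }

    public static void main(String[] args) {
        // MEM USAGE values (MiB) copied from the docker stats output above.
        Map<String, Double> memUsageMiB = new LinkedHashMap<>();
        memUsageMiB.put("springboot", 151.0);
        memUsageMiB.put("spark", 35.68);
        memUsageMiB.put("vertx", 50.94);
        memUsageMiB.put("jersey", 110.8);
        memUsageMiB.put("play", 88.76);
        memUsageMiB.put("dropwizard", 127.3);

        for (Map.Entry<String, Double> e : memUsageMiB.entrySet()) {
            System.out.printf("%-11s %7.2f MiB in total, %+8.2f MiB relative to the heap%n",
                    e.getKey(), e.getValue(), relativeToHeapMiB(e.getValue()));
        }
    }
}
```

For Spring Boot this comes to roughly 101 MiB on top of the 50 MiB heap, while Spark stays below the heap size altogether.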

To analyse this large memory usage of Spring we use the native memory tracking java tool:

docker exec rest_springboot_1 jcmd 6 VM.native_memory summary

giving:

    Total: reserved=1443704KB, committed=158176KB
    - Java Heap (reserved=51200KB, committed=51200KB)
        (mmap: reserved=51200KB, committed=51200KB)
    - Class (reserved=1083313KB, committed=36657KB)
        (classes #5470)
        (malloc=6065KB #6169)
        (mmap: reserved=1077248KB, committed=30592KB)
    - Thread (reserved=33037KB, committed=33037KB)
        (thread #33)
        (stack: reserved=32896KB, committed=32896KB)
        (malloc=103KB #166)
        (arena=38KB #64)
    - Code (reserved=251589KB, committed=12717KB)
        (malloc=1989KB #3475)
        (mmap: reserved=249600KB, committed=10728KB)
    - GC (reserved=7648KB, committed=7648KB)
        (malloc=5772KB #159)
        (mmap: reserved=1876KB, committed=1876KB)
    - Compiler (reserved=145KB, committed=145KB)
        (malloc=14KB #152)
        (arena=131KB #3)
    - Internal (reserved=6461KB, committed=6461KB)
        (malloc=6429KB #7119)
        (mmap: reserved=32KB, committed=32KB)
    - Symbol (reserved=8969KB, committed=8969KB)
        (malloc=6212KB #56128)
        (arena=2757KB #1)
    - Native Memory Tracking (reserved=1156KB, committed=1156KB)
        (malloc=6KB #74)
        (tracking overhead=1150KB)
    - Arena Chunk (reserved=186KB, committed=186KB)
        (malloc=186KB)

Looking at the committed memory, we see that Spring has loaded a lot of classes (5470, using 36MB) and uses a lot of space for threads (33MB).

The Code section (the JVM's code cache, which holds JIT-compiled code) also contributes 12MB. This is the effect of the large library of Spring components used by Spring Boot.
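To see at a glance which categories dominate, the summary can be parsed and ranked by committed size. The following is a rough sketch rather than an official tool: the class name `NmtSummary` and the regular expression are my own, and the pattern only assumes the header layout shown in the output above.

```java
import java.util.ArrayList;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

class NmtSummary {
    // Matches category headers such as
    // "- Class (reserved=1083313KB, committed=36657KB)".
    private static final Pattern CATEGORY = Pattern.compile(
            "-\\s+([A-Za-z ]+?)\\s+\\(reserved=(\\d+)KB, committed=(\\d+)KB\\)");

    // Returns committed KB per category, in the order of appearance.
    static Map<String, Long> committedKb(String summary) {
        Map<String, Long> result = new LinkedHashMap<>();
        Matcher m = CATEGORY.matcher(summary);
        while (m.find()) {
            result.put(m.group(1), Long.parseLong(m.group(3)));
        }
        return result;
    }

    public static void main(String[] args) {
        // Category header lines copied from the summary above; detail lines omitted.
        String summary =
                "- Java Heap (reserved=51200KB, committed=51200KB)\n"
              + "- Class (reserved=1083313KB, committed=36657KB)\n"
              + "- Thread (reserved=33037KB, committed=33037KB)\n"
              + "- Code (reserved=251589KB, committed=12717KB)\n"
              + "- GC (reserved=7648KB, committed=7648KB)\n"
              + "- Compiler (reserved=145KB, committed=145KB)\n"
              + "- Internal (reserved=6461KB, committed=6461KB)\n"
              + "- Symbol (reserved=8969KB, committed=8969KB)\n"
              + "- Native Memory Tracking (reserved=1156KB, committed=1156KB)\n"
              + "- Arena Chunk (reserved=186KB, committed=186KB)\n";

        Map<String, Long> committed = committedKb(summary);
        long totalKb = 0;
        for (long kb : committed.values()) {
            totalKb += kb;
        }

        // Largest committed category first.
        List<Map.Entry<String, Long>> sorted = new ArrayList<>(committed.entrySet());
        sorted.sort((a, b) -> Long.compare(b.getValue(), a.getValue()));
        for (Map.Entry<String, Long> e : sorted) {
            System.out.printf("%-24s %7d KB %5.1f%%%n",
                    e.getKey(), e.getValue(), 100.0 * e.getValue() / totalKb);
        }
    }
}
```

One detail worth noting from the Thread section: 32896KB of stack over 33 threads is close to 1MB per thread, so the thread share grows more or less linearly with the number of threads a framework starts.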

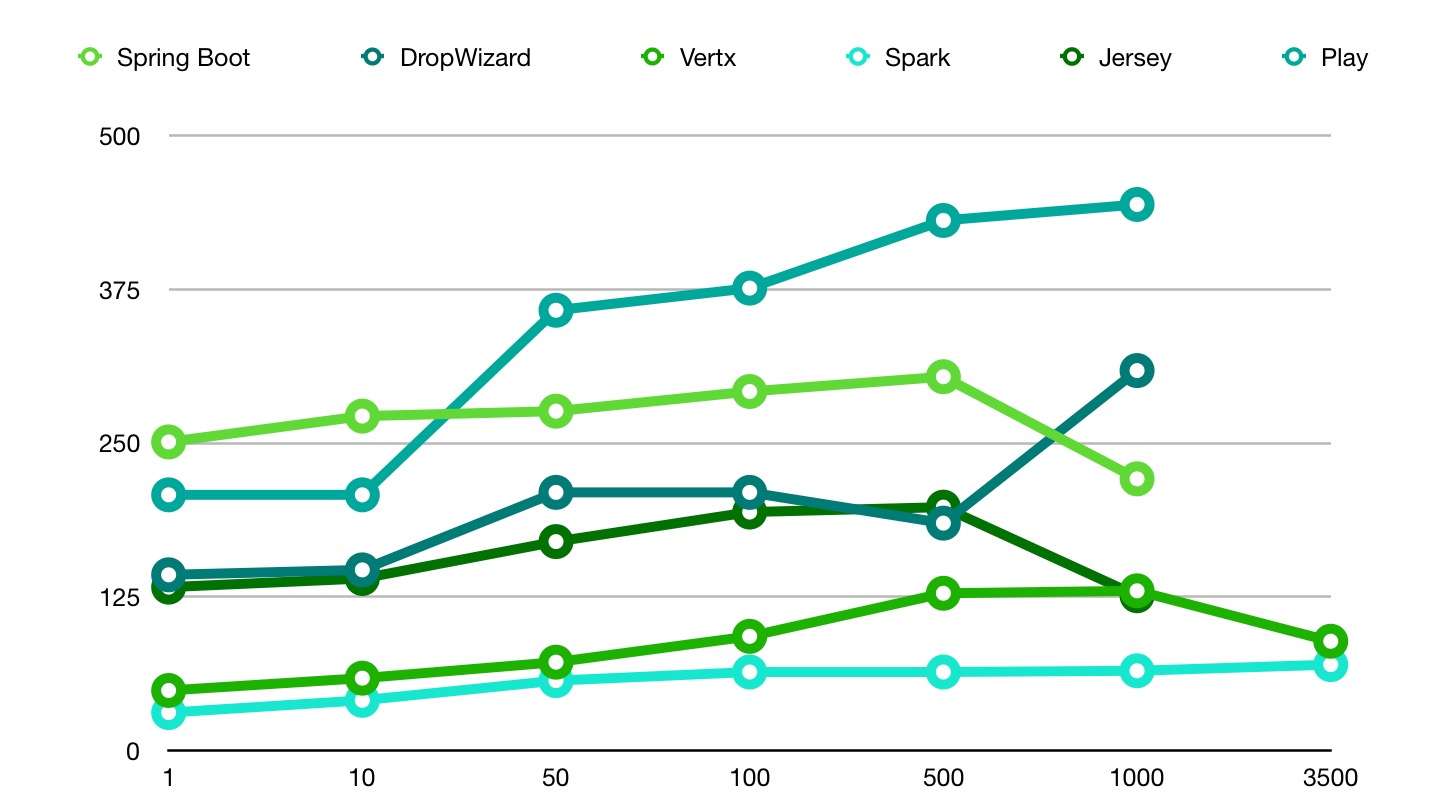

We also analysed the effect of a greater load. We varied the load from 1 request per second to the maximum that the CPU usage of the component would allow in our setup, which was mostly around 1000 requests per second. For each measurement we waited for the memory usage to become constant; it sometimes decreased due to garbage collection. In the results we see that Vertx and Spark stayed constant at a low memory usage, while Play’s memory usage increased significantly under the greater load.
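The tool used to generate this load is not described here, so the following is only a sketch of how a fixed request rate could be produced with the standard library. The class name `SteadyLoad`, its arguments and the timeouts are assumptions for illustration, not the setup used for the measurements.

```java
import java.io.IOException;
import java.net.HttpURLConnection;
import java.net.URL;
import java.util.concurrent.Executors;
import java.util.concurrent.ScheduledExecutorService;
import java.util.concurrent.TimeUnit;

class SteadyLoad {
    // Interval between two requests, in microseconds, for a target rate.
    static long periodMicros(int requestsPerSecond) {
        if (requestsPerSecond < 1 || requestsPerSecond > 1_000_000) {
            throw new IllegalArgumentException("rate must be between 1 and 1000000");
        }
        return 1_000_000L / requestsPerSecond;
    }

    // Performs one GET request; returns the HTTP status, or -1 on failure.
    static int get(String url) {
        try {
            HttpURLConnection c = (HttpURLConnection) new URL(url).openConnection();
            c.setConnectTimeout(1000);
            c.setReadTimeout(1000);
            int status = c.getResponseCode();
            c.disconnect();
            return status;
        } catch (IOException e) {
            return -1;
        }
    }

    public static void main(String[] args) throws InterruptedException {
        if (args.length < 3) {
            System.out.println("usage: SteadyLoad <url> <requests-per-second> <seconds>");
            return;
        }
        String url = args[0];
        int rate = Integer.parseInt(args[1]);
        int seconds = Integer.parseInt(args[2]);

        // Requests run one after another on a single thread, so if a request
        // takes longer than the period the real rate drops below the target.
        ScheduledExecutorService scheduler = Executors.newSingleThreadScheduledExecutor();
        scheduler.scheduleAtFixedRate(() -> get(url), 0, periodMicros(rate), TimeUnit.MICROSECONDS);
        TimeUnit.SECONDS.sleep(seconds);
        scheduler.shutdownNow();
    }
}
```

Running it with, for example, `http://localhost:8080/hello 100 60` while watching docker stats gives one data point; repeating it for several rates gives a curve per framework.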

In Conclusion

If a real microservice with a small memory footprint is what you are looking for, Spark and Vertx are the best candidates of the six. Spring Boot proved to be the worst.

When I saw the company name (Craftsman) I hoped you would pay less attention to tools and frameworks and pay more attention to (programming) techniques and design principles like TDD, BDD, DRY, SOLID, DDD, etc.

Nice experiment! I’m curious to learn how memory usage increases when load increases. Did you test that? These frameworks probably scale differently.

I will try that shortly. Will post the results here.

See the blog !

Also have a look at this article for more details on Spring Boot memory usage: https://spring.io/blog/2015/12/10/spring-boot-memory-performance

Hey, just leaving constructive feedback: next time you use a graph similar to the one above, it’s probably worth sacrificing some design for usability and using different colors instead of 6 different shades of grey.

Thanks! We will keep your feedback in mind.

Nice blog. We are also using Spring Boot for our services, and find the memory usage large even when the services are basic.

Please include http4s

Move on to golang, guys!

Microservices of 10MB and 20 millicores of CPU!

Great article. We have been experiencing exactly the memory problem in our Spring boot applications. Maybe Spark is the way to go in our case. Greetings.

Next time you do this benchmark, please include the light4j framework (it’s more like a platform for event-driven systems, with independent modules that can be used standalone)

https://github.com/networknt/microservices-framework-benchmark

Would you please change the colors of the diagram above the conclusion section?

It’s so hard to tell them apart.

You may want to include micronaut for the comparison…

Nice article, thank you.

We live in a time where we are charged per byte. That’s how these cloud providers operate.

Of course it is important to take good design practices into consideration, but as craftsmen we also have to take the resource usage into account. These costs keep on lingering indefinitely.